Scientists at University of Cambridge teach robot to taste as it cooks

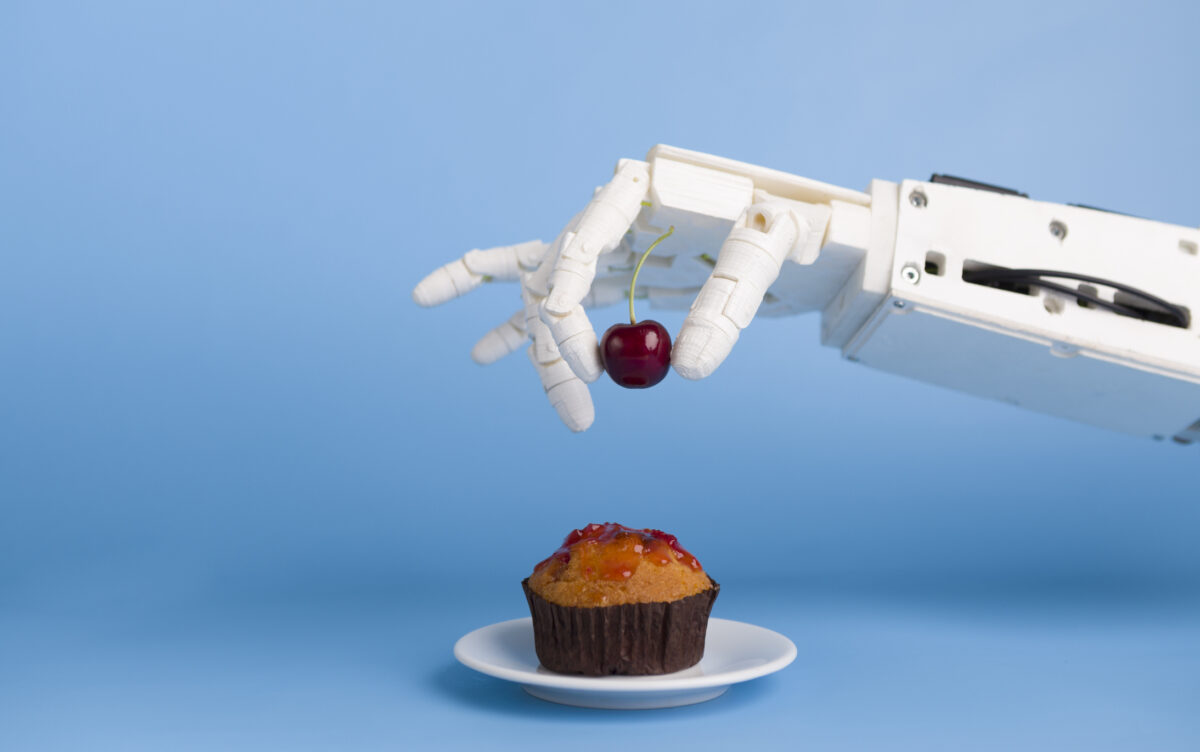

Researchers from the University of Cambridge have collaborated with British home appliance brand Beko to train a robot to taste the saltiness of food as it cooks.

During the experiment, researchers taught a robot to imitate the human process of chewing and tasting, which they believe could improve automated food preparation in the future.

The robot carried out the taste test on nine varieties of scrambled eggs and tomatoes, at three different stages of chewing, and produced multiple visual ‘taste maps’ of the dish as it cooked.

A conductance probe, acting as a salinity sensor, was attached to a robot arm to imitate the human sense of taste. As the robot prepared the meal, it altered the number of tomatoes and level of salt, using the probe to ‘taste’ the dish throughout.

Beyond identifying and reacting to salt levels, being able to mimic the human process of chewing is important. This is because tastes change as we chew due to the release of saliva and digestive enzymes, which affect the texture and flavour of foods as they break down in our mouths.

To imitate the change in texture caused by chewing, the egg was put into a blender and then ‘tasted’ again by the robot with the probe.
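The idea of a ‘taste map’ sampled at several chewing stages can be sketched in a few lines of Python. This is an illustration only, not the researchers’ code: the grid values are invented, the units are arbitrary, and the `blend` function is an assumed stand-in for the blender that simply pulls every reading towards the average.

```python
from statistics import mean, pstdev


def taste_map_summary(readings):
    """Summarise a grid of probe readings (arbitrary, made-up units)."""
    flat = [value for row in readings for value in row]
    return {"mean": mean(flat), "spread": pstdev(flat)}


def blend(readings, strength):
    """Assumed stand-in for chewing: move each reading towards the grid mean.

    strength=0 leaves the grid unchanged; strength=1 makes it uniform.
    """
    average = mean(value for row in readings for value in row)
    return [[value + strength * (average - value) for value in row]
            for row in readings]


# Hypothetical 3x3 map of an unblended dish: readings vary from spot to spot.
unchewed = [
    [8.0, 2.0, 8.0],
    [2.0, 8.0, 2.0],
    [8.0, 2.0, 8.0],
]

# Three stages, echoing the three stages of chewing described in the article.
stages = {
    "unchewed": unchewed,
    "partly chewed": blend(unchewed, 0.5),
    "fully chewed": blend(unchewed, 1.0),
}

summaries = {name: taste_map_summary(grid) for name, grid in stages.items()}

for name, summary in summaries.items():
    print(f"{name}: mean={summary['mean']:.2f}, spread={summary['spread']:.2f}")
```

In this toy model the average reading stays the same at every stage while the spread shrinks, so a single reading from the fully blended sample would say nothing about how unevenly the salt was distributed in the original dish.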

Researchers found that teaching a robot to follow a ‘taste as you go’ approach during cooking enabled it to assess the saltiness of a dish more accurately than other electronic tasting technologies, which have previously tested only a single uniform sample.

“Most home cooks will be familiar with the concept of tasting as you go – checking a dish throughout the cooking process to check whether the balance of flavours is right,” said Grzegorz Sochacki from Cambridge’s Department of Engineering, who co-conducted the experiment and co-authored a paper on the results.

“If robots are to be used for certain aspects of food preparation, it’s important that they are able to ‘taste’ what they’re cooking.”

Co-author Dr Arsen Abdulali, also from the Department of Engineering, added: “When we taste, the process of chewing also provides continuous feedback to our brains.

“Current methods of electronic testing only take a single snapshot from a homogenised sample, so we wanted to replicate a more realistic process of chewing and tasting in a robotic system, which should result in a tastier end product.”

The scientists are members of the Cambridge Bio-Inspired Robotics Laboratory, which has been working to train robots to perform “last metre problems which humans find easy”, such as cooking.

Before the ‘taste as you go’ training, the same robot was taught to make an omelette using feedback from human tasters.

“When a robot is learning how to cook, like any other cook, it needs indications of how well it did,” said Abdulali. “We want the robots to understand the concept of taste, which will make them better cooks. In our experiment, the robot can ‘see’ the difference in the food as it’s chewed, which improves its ability to taste.”

In the coming years the scientists want to improve the robot even further so it can prepare food that can be altered to suit individual tastes.

It is also hoped that the technology will find use in the home, according to Dr Muhammad W. Chughtai, Senior Scientist at Beko plc. “We believe that the development of robotic chefs will play a major role in busy households and assisted living homes in the future. This result is a leap forward in robotic cooking, and by using machine and deep learning algorithms, mastication will help robot chefs adjust taste for different dishes and users.”

The world’s first robot chef designed for use at home and in restaurants was launched earlier this year by Moley Robotics.

Other recent taste-related inventions include a pair of chopsticks that can imitate the taste of salt, created by a team of Japanese scientists with the aim of reducing the population’s sodium intake.

The results of the University of Cambridge robot taste experiment have been published in the scientific journal Frontiers in Robotics and AI.